Deploying Highly Available HashiCorp Vault in Azure Australia


This post covers the high-level decisions required to deploy HashiCorp Vault into Azure in Australia. While it might sound straightforward, there are some regional gotchas to be aware of - especially when deploying something that would be described as a Tier 1 service.

A local customer who was looking to deploy Vault set out a few guidelines:

- Follow best practice

- Architect for availability, but also be aware of cost implications

- Uptime SLA of 99.999%

- Can only be deployed to Azure Australia regions

So, we set about working on this like any other deployment…

- Follow the guide

- Find the right Terraform module

- `terraform apply`

- All done!

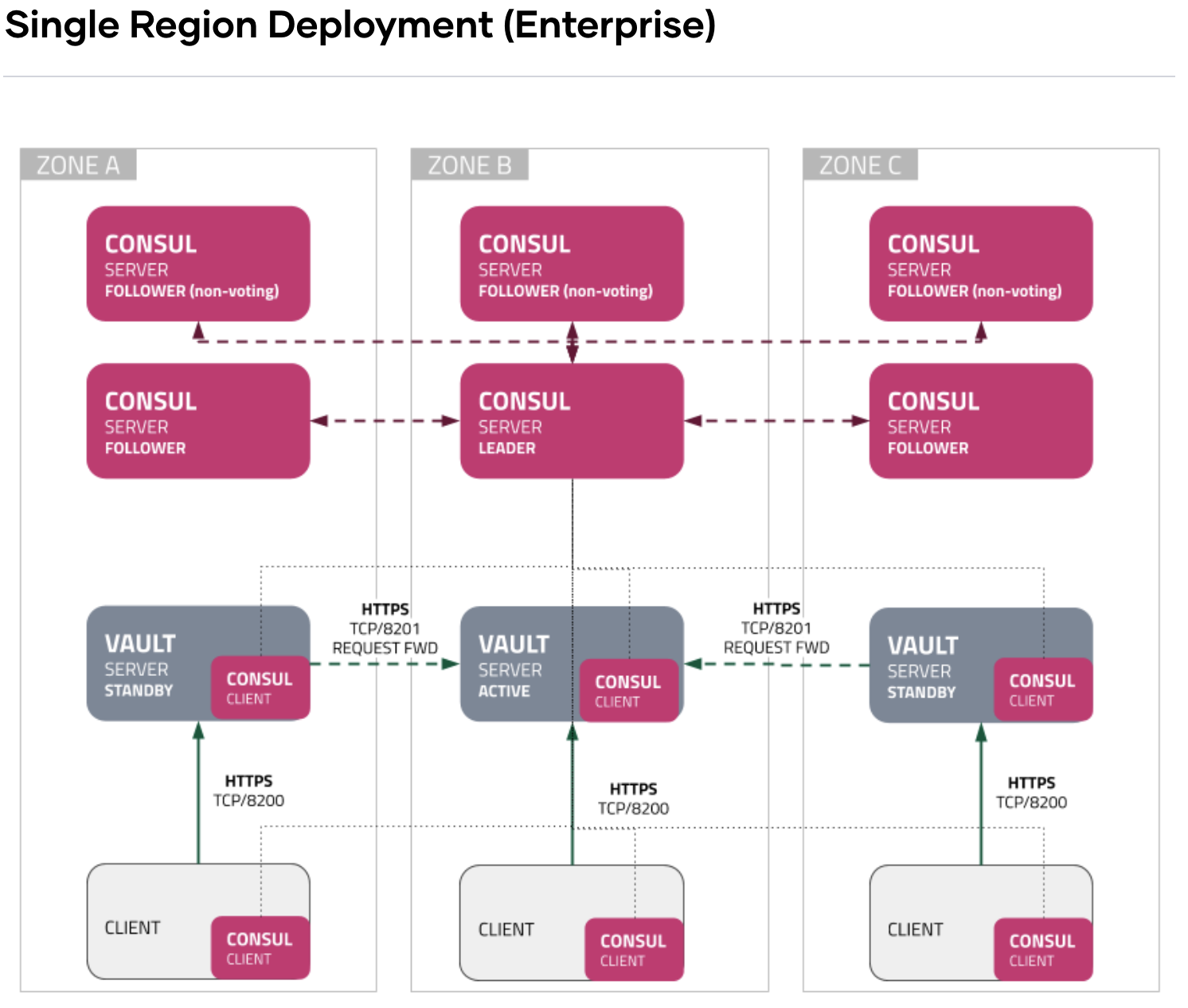

A quick Google search will land you on the HashiCorp Vault Reference Architecture page, where the recommended architecture sits within a single region, spread across 3 Zones. Ideally, there are 3 Vault nodes and 6 Consul nodes.

Awesome… So far, so good!

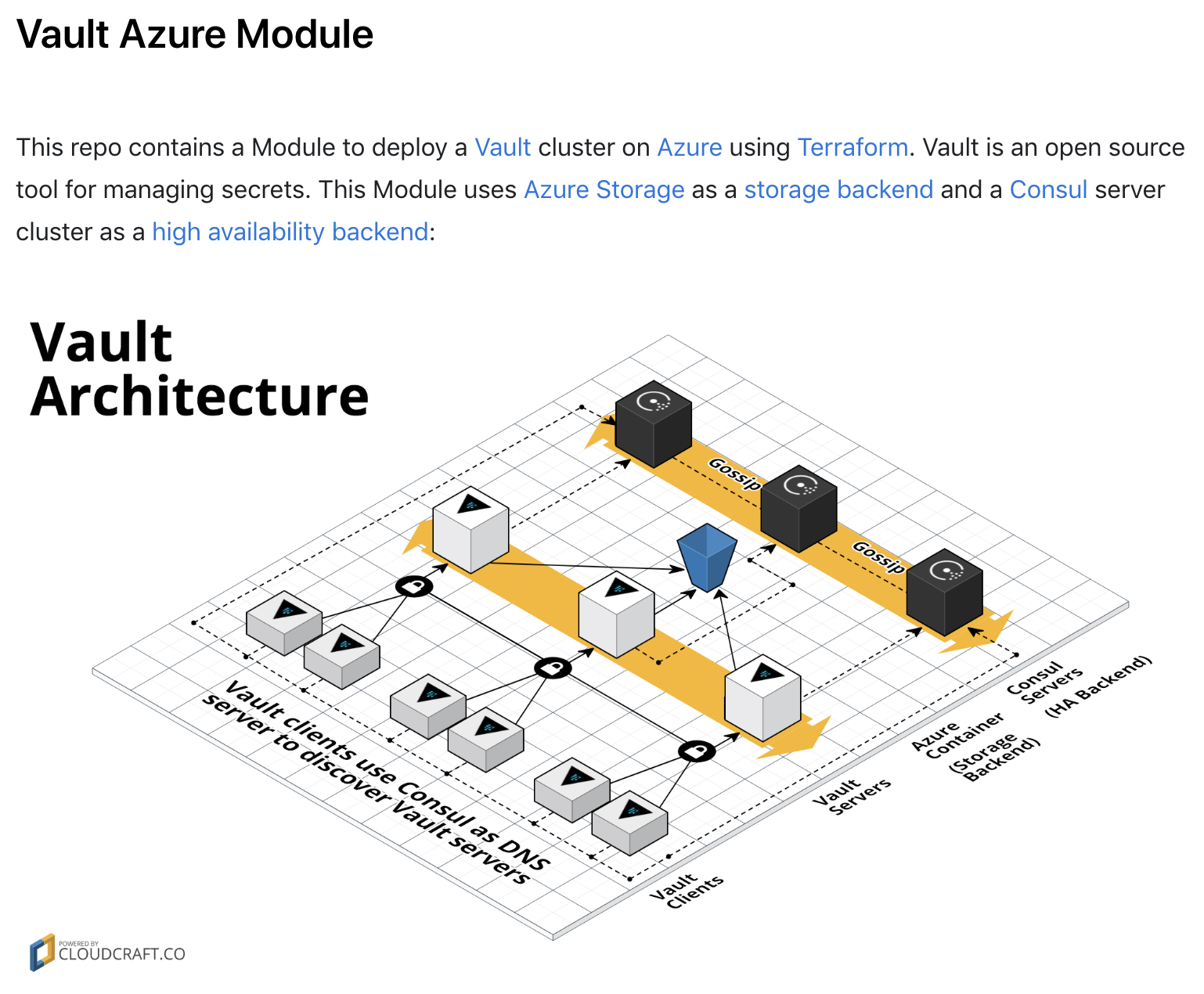

So now that we agree with the best practice approach to deploy, let’s find the matching Terraform Module. This is great! The module has the same architecture as that required and defined in Step 1.

Now we’re all set…

`terraform apply -auto-approve`

So we used the module, deployed locally (AU) and started hitting some issues. Not so much that the module didn’t work, or that Vault wasn’t available - it’s just that it didn’t meet the uptime required. So, we started digging a little deeper…

We went back to basics and took a look at the Azure architecture:

- What region are we using?

- What Availability Zones are in our region?

- How can we best use them to get the SLA we wanted for Vault?

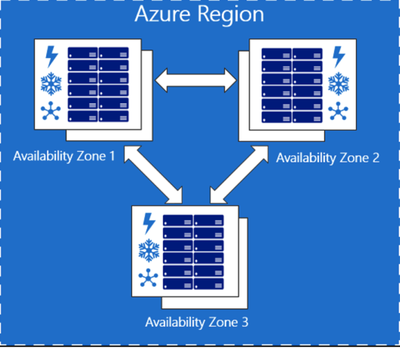

A quick look at the Microsoft documentation and we can easily see that Azure supports AZs:

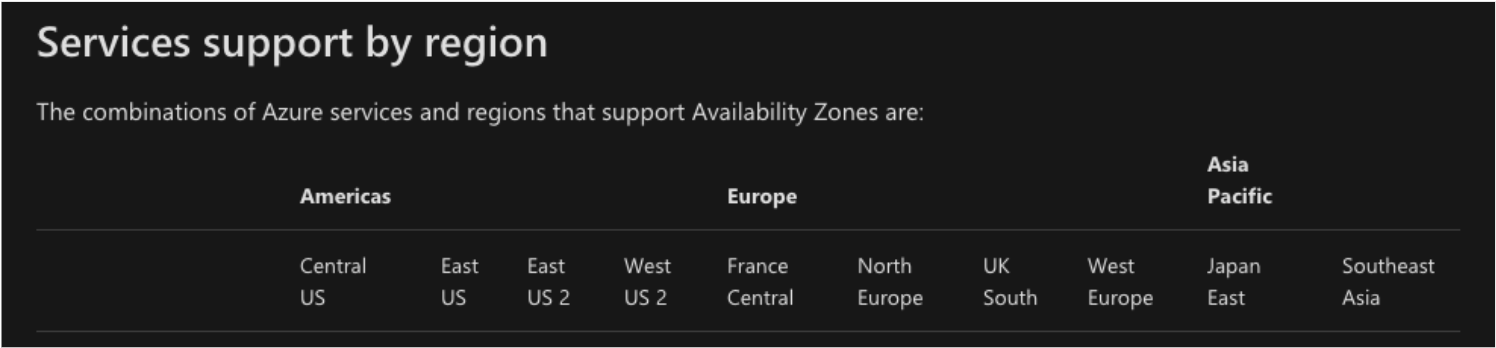

Awesome - now let’s see what regions are available in Australia:

Great - there are lots of regions to choose from!

But... hang on, Australia isn’t even listed in the supported regions for Azure AZs. WTF!

OK - so what’s next? If we can’t use Availability Zones, then what is available in each region, and what can we use?

Azure has the concept of Availability Sets: a group of two or more virtual machines in the same DC, offering a 99.95% availability SLA.

So we can deploy a few Vault VMs and place them in an Availability Set. Each VM within the Availability Set is assigned an Update Domain and a Fault Domain. Fault Domains define the virtual machines that share a common power source and network switch.
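To put the gap between the Availability Set SLA and the customer’s 99.999% target in perspective, here is a minimal sketch that converts an SLA percentage into a yearly downtime budget. The function name and the 365-day year are illustrative assumptions, not anything taken from Azure tooling.

```python
# Minutes in a (non-leap) year; an approximation used only for illustration.
MINUTES_PER_YEAR = 365 * 24 * 60


def allowed_downtime_minutes(sla_percent: float) -> float:
    """Return the yearly downtime budget, in minutes, for an SLA percentage."""
    return MINUTES_PER_YEAR * (1 - sla_percent / 100)


if __name__ == "__main__":
    # 99.95% is the Availability Set SLA; 99.999% is the customer's target.
    for sla in (99.95, 99.999):
        minutes = allowed_downtime_minutes(sla)
        print(f"{sla}% allows about {minutes:.1f} minutes of downtime per year")
```

In round numbers, 99.95% allows a little over four hours of downtime a year, while 99.999% allows only about five minutes.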

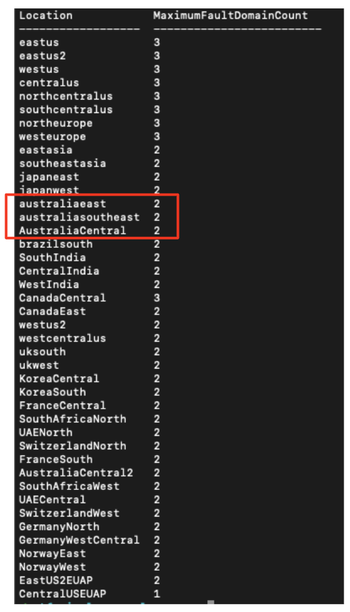

So, how many Fault Domains do Azure Regions in Australia have? It’s not so easy to find, but the answer is somewhat surprising:

Here’s the same command you can run yourself in Azure CLI:

az vm list-skus --resource-type availabilitySets --query '[?name==`Aligned`].{Location:locationInfo[0].location, MaximumFaultDomainCount:capabilities[0].value}' -o Table

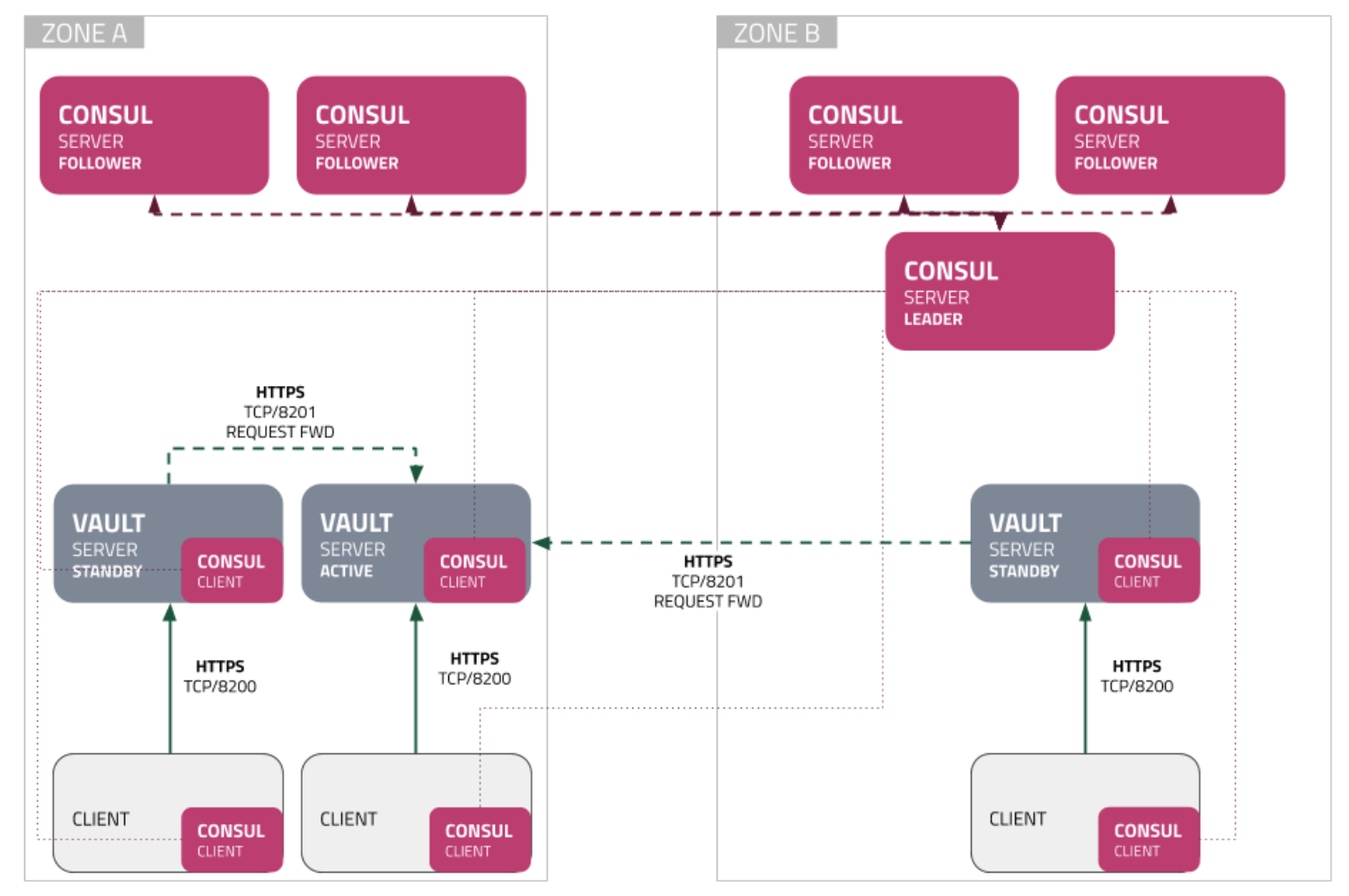

So basically, there are only 2 fault domains to operate across for the region. A deployment might look like this:

(Where Zones = Fault Domains)

This is OK for something not in production. However, for an enterprise deployment this would fail, as it only provides partial redundancy. If Zone B (i.e. Fault Domain 2) were to fail, the Consul cluster would lose quorum and so would also fail.
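The quorum problem can be sketched in a few lines. This is an illustration only: the layout below (5 voting Consul servers split 2/3 across two Fault Domains) is a hypothetical example, not the customer’s actual topology, and the helper names are made up for this post.

```python
def quorum(voters: int) -> int:
    """Smallest majority of a cluster with the given number of voters."""
    return voters // 2 + 1


def survives(voters_per_domain: dict, failed: str) -> bool:
    """True if the cluster keeps quorum after losing one whole Fault Domain."""
    total = sum(voters_per_domain.values())
    remaining = total - voters_per_domain[failed]
    return remaining >= quorum(total)


if __name__ == "__main__":
    # Hypothetical layout: 5 voting Consul servers over 2 Fault Domains.
    layout = {"FD1": 2, "FD2": 3}
    for domain in layout:
        state = "quorum kept" if survives(layout, domain) else "quorum lost"
        print(f"{domain} fails: {state}")
```

With only two Fault Domains, one of them always holds at least half of the voters, so there is always a single domain whose loss takes the cluster down. An even split such as 3/3 is worse still, because losing either side drops the cluster below the majority of 4.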

In theory, we could stretch this cluster over a couple of Azure Regions, therefore providing the redundancy required.

Let’s take a deeper look…

Consul can be performance sensitive. Leader elections happen when heartbeat timeouts are exceeded, so there is a tradeoff in tuning them: a long timeout means failures take longer to detect and recover from, while a short timeout risks leader instability.

The value of `raft_multiplier` is a scaling factor that directly affects the following parameters:

| Param | Default Value |
|---|---|
| HeartbeatTimeout | 1000ms |
| ElectionTimeout | 1000ms |
| LeaderLeaseTimeout | 500ms |
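As a rough sketch of what the scaling factor does, the snippet below multiplies each value in the table by a given `raft_multiplier`. It assumes the table values are the base timings at a multiplier of 1 and that each timeout scales linearly; check the Consul documentation for your version before relying on the exact numbers. The function name is illustrative.

```python
# Base timings from the table above, assumed to apply at raft_multiplier = 1.
BASE_TIMEOUTS_MS = {
    "HeartbeatTimeout": 1000,
    "ElectionTimeout": 1000,
    "LeaderLeaseTimeout": 500,
}


def scaled_timeouts(raft_multiplier: int) -> dict:
    """Scale each base timeout linearly by the multiplier (an assumption)."""
    if raft_multiplier < 1:
        raise ValueError("raft_multiplier must be at least 1")
    return {name: base * raft_multiplier for name, base in BASE_TIMEOUTS_MS.items()}


if __name__ == "__main__":
    for multiplier in (1, 5):
        print(multiplier, scaled_timeouts(multiplier))
```

A higher multiplier gives the cluster more tolerance for slow links between servers, at the cost of slower failure detection.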

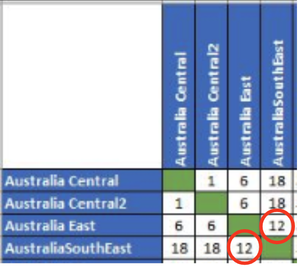

Azure publishes best-case (DC to DC) latency figures. As you add firewalls, network endpoints, routers, etc., these numbers will only get worse.

The problem was that the customer had experienced figures much worse than those published, so this idea was scrapped.

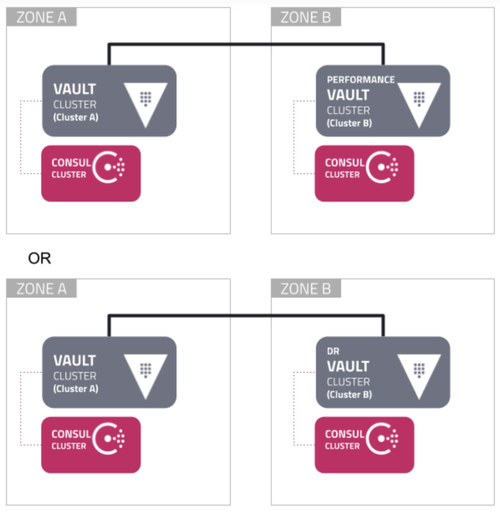

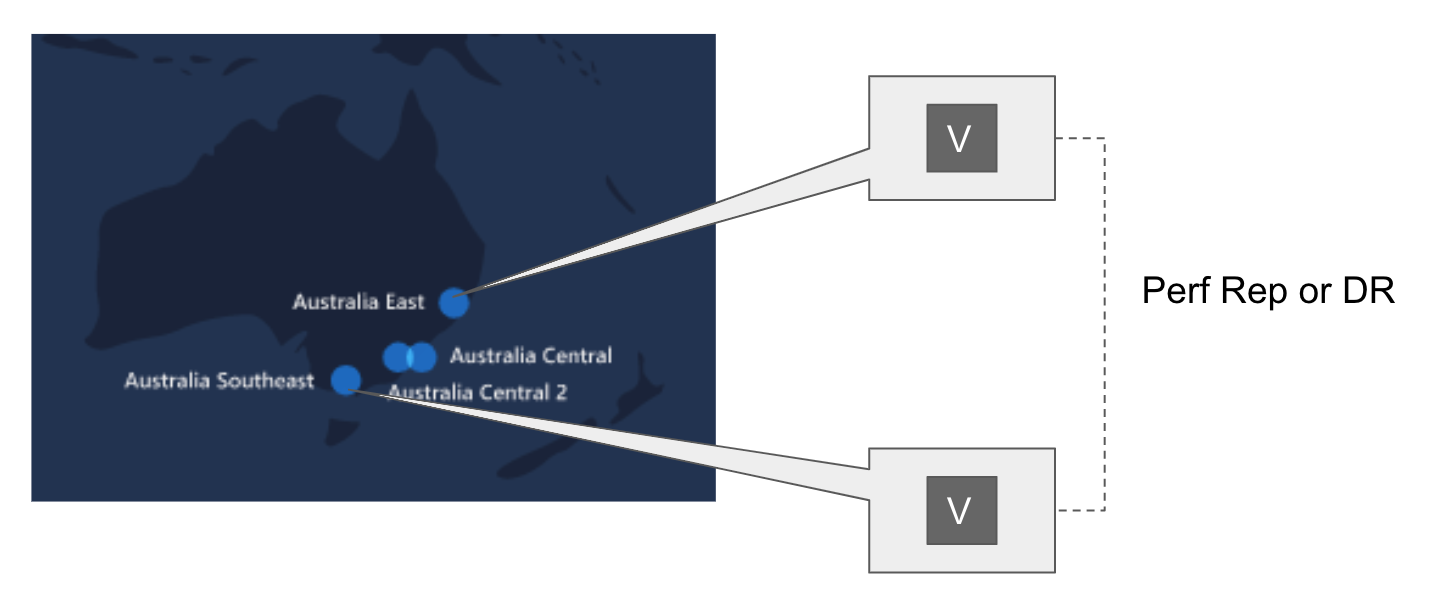

Ultimately, the best solution to achieve the required SLA for the region is to have a Vault Cluster in each Fault Domain for the required region. These two clusters could then replicate configuration and data via either performance replication or disaster recovery replication:

You could also replicate from a Vault Cluster in region Australia East to a separate cluster in region Australia Southeast.
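A back-of-the-envelope sketch shows why two replicated clusters can get closer to the target. It makes strong assumptions that are not guaranteed in practice: each cluster is taken to be about 99.95% available (borrowing the Availability Set figure), failures are treated as fully independent, and failover between clusters is treated as instant. The function name is illustrative.

```python
def combined_availability(*availabilities_percent: float) -> float:
    """Availability (%) of a service that is up if ANY one cluster is up.

    Assumes independent failures and instant failover, which real
    deployments will not fully achieve.
    """
    all_down = 1.0
    for availability in availabilities_percent:
        all_down *= 1 - availability / 100
    return 100 * (1 - all_down)


if __name__ == "__main__":
    single = 99.95  # assumed per-cluster availability
    print(f"one cluster:  {single}%")
    print(f"two clusters: {combined_availability(single, single):.6f}%")
```

Under those assumptions the pair clears 99.999% on paper. Real figures will be lower once shared dependencies and failover time are taken into account.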

For a production Tier 1 app, this is certainly the best technical solution to the problem, but it might not be the best financially. I spoke to Azure architects (offline) about the problem, and they suggested Azure AZs could be in-country before the end of 2020. This would certainly solve the problem for Vault and other HashiCorp tools.

Another alternative could be to deploy Vault in managed Kubernetes (AKS), but that only raises more questions and concerns (security, non-K8s apps, deployment, uptime of the service, etc.). Each customer would have to weigh up all of these to come to a decision that meets all their requirements.